AI announcements have accelerated faster than most enterprise learning roadmaps. New models launch every quarter. Vendors promise personalization, automation, and instant content creation. Yet inside many organizations, the LMS still behaves like a compliance tracker with better graphics.

The next shift isn’t about adding another experience layer. It’s about embedding intelligence into the system that governs training itself, the AI-powered LMS: how learning adapts in real time, how compliance risk is flagged early, and how interventions trigger inside business workflows.

Here we explain what an AI LMS actually is, how it differs from traditional systems, which features matter in 2026, and how to evaluate vendors without buying into surface-level AI claims. It gives you a practical lens for a smarter buying decision.

What Is an AI Learning Management System?

An AI Learning Management System (AI LMS) is a learning platform that uses machine learning models and data intelligence to dynamically plan, deploy, and measure training based on real-time signals from your workforce and business systems.

It does not simply host courses or track attendance. It analyzes performance data, assessment patterns, behavioral engagement, compliance exposure, and role requirements to continuously adjust learning journeys.

For most large enterprises, the traditional LMS was built for a different era. Its primary mandate was coverage and compliance: assign training, document completion, and produce audit trails. That model worked when skill cycles were longer and performance gaps surfaced slowly.

The difference becomes clearer when you look at the operating logic. A traditional LMS primarily publishes and assigns static courses, tracks completion and test scores, relies heavily on manual administrative workflows, reports mostly historical activity, and functions as a standalone learning portal.

In contrast, an AI-powered LMS generates adaptive learning journeys, models skill progression and predicts capability gaps, automates learning triggers based on real-time performance data, connects learning signals to business metrics, and embeds learning interventions directly into everyday workflow systems.

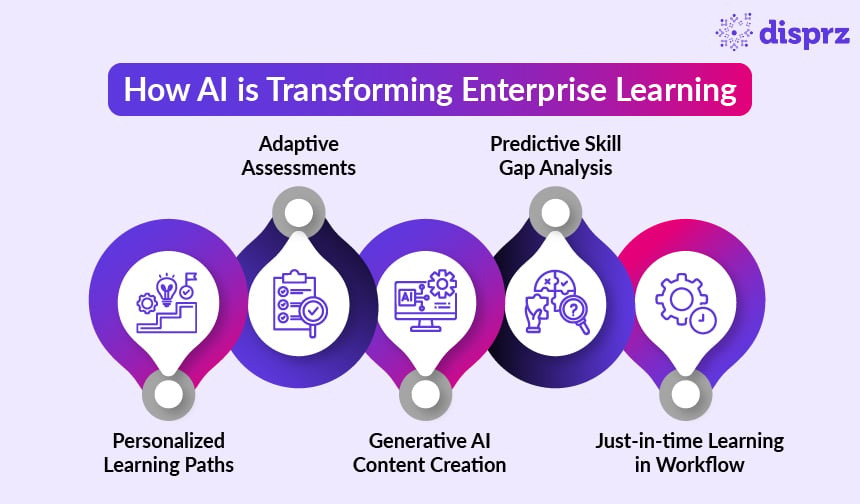

How AI Is Transforming Enterprise Learning

AI transforms enterprise learning only if it alters how your system responds to business movement. If conversion rates dip, compliance exposure rises, onboarding volumes spike, or a new product launches, your learning infrastructure should adjust without waiting for quarterly reviews or manual reassignment cycles.

The real test is simple: can the platform detect capability risk early and trigger precise intervention automatically? That shift from calendar-driven programs to signal-driven response is where transformation becomes tangible.

The impact shows up in a few operational areas that you should evaluate closely.

Personalized Learning Paths

If you are evaluating AI-driven personalization, start by questioning what “personalized” actually means in your context. In many platforms, it still translates to role-based assignment. Everyone under the same job code receives the same pathway. That may simplify administration, but it ignores performance variance.

True AI-driven personalization should respond to how your people actually perform.

In practice, that means:

- Learning sequences shift based on assessment depth, KPI trends, tenure, and prior certification history.

- Your top performers are not forced through foundational modules simply because of role mapping.

- Employees with measurable gaps receive targeted reinforcement aligned to those gaps.

- Pathways update as new performance data flows into the system.

When you assess vendors, probe beyond the interface:

- Does the platform integrate with CRM, HRMS, or performance systems, or does it rely only on LMS activity?

- How often are recommendations recalibrated?

- Can you see the logic behind a recommendation?

If the system cannot differentiate two employees in the same role based on real performance signals, personalization will remain surface-level. If it can, learning time begins to align with actual business variance.

Adaptive Assessments

If you are still relying on static quizzes, you are validating attendance more than capability. Adaptive assessment models change what you can actually see.

In an AI-enabled system:

- Question difficulty shifts in real time based on how an employee performs.

- Scenario depth increases when proficiency is demonstrated and narrows when weaknesses appear.

- Identified gaps automatically reshape the learning pathway instead of triggering blanket retraining.

This gives you sharper diagnostic data. Instead of retraining the entire workforce, you can concentrate effort where exposure or performance variance is measurable.
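
As a rough illustration of the first and third bullets, here is a minimal sketch of a "staircase" quiz: difficulty steps up after a correct answer and down after a miss, and repeated misses on one skill are surfaced as a gap. The class name, the 1 to 5 difficulty scale, and the two-miss threshold are assumptions made for this example. Production adaptive testing usually relies on richer statistical models such as item response theory.

```python
from dataclasses import dataclass, field


@dataclass
class AdaptiveQuiz:
    """Hypothetical staircase quiz: difficulty rises after a correct answer
    and falls after a miss, clamped to [min_level, max_level]."""
    level: int = 3
    min_level: int = 1
    max_level: int = 5
    misses_by_skill: dict = field(default_factory=dict)

    def record(self, skill: str, correct: bool) -> int:
        """Record one answer and return the difficulty of the next question."""
        if correct:
            self.level = min(self.max_level, self.level + 1)
        else:
            self.level = max(self.min_level, self.level - 1)
            self.misses_by_skill[skill] = self.misses_by_skill.get(skill, 0) + 1
        return self.level

    def gaps(self, min_misses: int = 2) -> list:
        """Skills missed at least min_misses times (assumed threshold)."""
        return sorted(s for s, n in self.misses_by_skill.items() if n >= min_misses)


quiz = AdaptiveQuiz()
answers = [("discovery", True), ("pricing", False), ("pricing", False), ("discovery", True)]
levels = [quiz.record(skill, correct) for skill, correct in answers]
print(levels)       # difficulty after each answer
print(quiz.gaps())  # skills that would reshape the pathway
```

Only the skills returned by `gaps()` would feed targeted reinforcement, which is the opposite of blanket retraining.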

Generative AI Content Creation

Generative AI lowers the cost of content production and increases its speed. That alone is not transformational. The shift happens when generation is triggered by live business signals.

In a performance-aligned architecture:

- Product releases generate role-specific enablement automatically.

- Regulatory updates create geography-specific compliance refreshers.

- Identified skill gaps produce targeted micro-interventions within days, not months.

Speed alone is not enough. Without alignment to defined competencies and governance controls, content velocity creates sprawl. When aligned, content becomes responsive to live capability gaps.

Predictive Skill Gap Analysis

Most dashboards tell you what happened last month. Predictive models estimate where gaps are likely to surface next quarter.

An AI-driven system can analyze hiring plans, attrition trends, promotion velocity, and certification decay to forecast emerging shortages. This allows you to intervene before expansion, product launches, or regulatory shifts expose readiness gaps. Learning moves from reactive support to forward-looking capability planning.

Just-in-Time Learning in Workflow

The historical weakness of enterprise learning is separation from execution. Employees move out of core systems into training portals, and the connection to real work weakens.

AI-enabled platforms integrate with operational systems so that learning triggers align with performance signals:

- A stalled deal in CRM prompts targeted objection-handling reinforcement.

- A role transition recorded in HR systems initiates contextual onboarding.

- Operational deviations trigger focused safety or compliance refreshers.

Learning shifts from scheduled programming to embedded intervention. The time between signal and response narrows, increasing the likelihood of application where it matters most.

AI LMS vs Traditional LMS (Side-by-Side Comparison)

Whether your current LMS is modern or mature, it was likely designed around structured programs, defined curricula, and clear reporting lines. That model works well when stability is high. It becomes strained when roles evolve quickly and performance signals shift mid-cycle.

An AI LMS operates with a different logic. It treats learning as a dynamic layer connected to live data, not as a fixed catalog delivered on schedule.

Here is how that difference shows up operationally:

| AI LMS | Traditional LMS |
|---|---|
| Learning journeys that adjust as performance and assessment data change | Courses designed and assigned in defined sequences |
| Automated triggers based on role transitions, risk indicators, or KPI movement | Admin-configured enrollment rules and reminder workflows |
| Analytics focused on skill progression and performance correlation | Reporting centered on completion and scores |
| Recommendations differentiated by individual proficiency and performance patterns | Uniform curricula within roles or levels |

In a traditional structure, you design programs carefully, map them to roles, and monitor participation. Adjustments happen in defined cycles — quarterly, annually, or after major reviews.

In an AI-driven structure, adjustments can happen continuously. A promotion, a performance dip, or a product shift recalibrates what an employee sees next. The system responds to signals rather than waiting for a new program rollout.

Why this matters for enterprise L&D teams

It matters because the system you run shapes the kind of conversations you can have with the business. If your LMS is built around courses and completion, you can prove coverage but not capability movement. When performance gaps surface, you cannot easily show whether training influenced the outcome.

If your platform tracks skill progression and responds to live signals, you gain visibility into where readiness is slipping and can intervene earlier. That difference affects how L&D is perceived, either as a reporting function or as a partner managing capability risk.

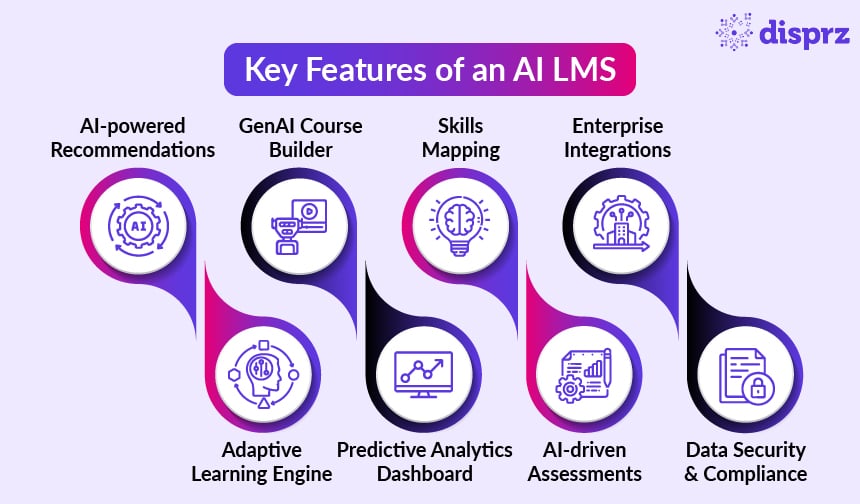

Key Features to Look for in an AI LMS in 2026

By 2026, most platforms will list similar AI features. The difference will not be in feature presence, but in how deeply those capabilities influence learning decisions, workflows, and data integrity.

Below is what each capability should actually do in an enterprise context.

AI-Powered Content Recommendations

At a surface level, recommendations are driven by job role, department, or previously completed courses. That approach improves discoverability but does not change capability allocation.

A more mature system should:

- Adjust recommendations based on demonstrated proficiency, not just role mapping.

- Incorporate signals such as assessment performance, time in role, and performance indicators.

- Update suggestions dynamically when those signals shift.

- Provide transparency into why a recommendation appears.

For example: If two employees share the same title but one consistently underperforms on negotiation metrics in CRM data, the system should differentiate their learning path. If it cannot, it is operating on static tagging logic.

The test: if performance changes tomorrow, will recommendations change automatically?

Adaptive Learning Engine

Many platforms claim adaptive learning because they use branching logic in assessments. For example:

- If a learner scores below 70%, assign Module B.

- If they score above 90%, unlock Advanced Module C.

That is conditional logic. It is preconfigured. It does not evolve unless someone manually changes the rules.

A true adaptive learning platform behaves differently:

- It recalculates proficiency as more data accumulates.

- It adjusts difficulty continuously, not just once after a test.

- It detects patterns across multiple interactions, not a single score.

- It modifies sequencing based on trend, not one threshold.

For example: If a sales rep consistently handles discovery well but struggles with margin control across three scenarios, the system increases exposure to pricing simulations — even if overall test scores remain high.

That is adaptivity. The distinction matters because conditional branching scales administration but adaptive modeling scales decision quality. If the system cannot adjust without predefined rules, it is structured automation and not adaptive intelligence.
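
The contrast can be sketched in a few lines. The first function below is the preconfigured 70% / 90% branching described above. The second part keeps a running per-skill estimate using an exponentially weighted moving average, which is one simple way to model a trend, chosen here for illustration and not a description of any vendor's method. The function names, the 0.3 weight, the 0.5 starting estimate, and the sample outcomes are all assumptions.

```python
def rule_based_next(score: float) -> str:
    """Preconfigured branching on a single overall score."""
    if score < 70:
        return "Module B"
    if score > 90:
        return "Advanced Module C"
    return "Continue"


def update_proficiency(current: float, outcome: float, weight: float = 0.3) -> float:
    """Exponentially weighted moving average: recent evidence counts more."""
    return (1 - weight) * current + weight * outcome


def weakest_skill(history: dict, start: float = 0.5) -> tuple:
    """history maps skill -> scenario outcomes in [0, 1], oldest first.
    Returns the skill with the lowest running estimate and all estimates."""
    estimates = {}
    for skill, outcomes in history.items():
        level = start
        for outcome in outcomes:
            level = update_proficiency(level, outcome)
        estimates[skill] = round(level, 3)
    return min(estimates, key=estimates.get), estimates


# A rep with a healthy overall score but a persistent pricing weakness.
history = {
    "discovery": [0.90, 0.95, 0.90],
    "margin_control": [0.40, 0.30, 0.35],
}
print(rule_based_next(85))        # the threshold rule sees nothing to fix
print(weakest_skill(history)[0])  # the trend model points at margin control
```

The threshold rule only ever sees one number, so it cannot surface a weakness hidden inside a good overall score. The per-skill estimate can, because it accumulates evidence across scenarios.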

Generative AI Course Builder

Generating slides and quizzes is easy. The real risk is uncontrolled volume.

A credible generative layer should:

- Tie every generated asset to a defined competency.

- Maintain version control for compliance training.

- Allow human review before deployment.

- Trigger content creation based on identified skill gaps and not just prompts.

For example: If a new product feature launches, the system should generate role-specific enablement modules aligned to sales objections, implementation steps, and support workflows and not a generic summary deck.

Predictive Analytics Dashboard

Most dashboards tell you what happened last month. That is useful for reporting. It does not help you plan.

Predictive capability means:

- Modeling certification expiry risk before deadlines are missed.

- Estimating skill shortages based on hiring velocity and attrition.

- Forecasting readiness gaps tied to expansion plans.

- Detecting declining proficiency trends before performance reviews surface them.

For instance: If attrition is high among certified engineers and new hires are entering slower than planned, the system should estimate when coverage drops below safe thresholds. That shifts learning from reactive correction to risk management.
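
A back-of-the-envelope version of that coverage estimate might look like the sketch below. It assumes a constant monthly attrition rate and a constant inflow of newly certified hires, both simplifications. The function name, parameters, and the numbers in the usage lines are made up for illustration.

```python
from typing import Optional


def months_until_shortfall(certified: int,
                           monthly_attrition_rate: float,
                           monthly_certified_hires: float,
                           safe_threshold: int,
                           horizon_months: int = 36) -> Optional[int]:
    """Return the first month in which projected certified headcount falls
    below safe_threshold, or None if it stays at or above it for the horizon."""
    headcount = float(certified)
    for month in range(1, horizon_months + 1):
        # Lose a fixed share of the current pool, then add the new hires.
        headcount = headcount * (1 - monthly_attrition_rate) + monthly_certified_hires
        if headcount < safe_threshold:
            return month
    return None


# Illustrative inputs only: 100 certified engineers, 5% monthly attrition,
# 2 newly certified hires per month, and a safe floor of 85.
print(months_until_shortfall(100, 0.05, 2, 85))  # month of first shortfall
print(months_until_shortfall(100, 0.05, 5, 85))  # None: hiring keeps pace
```

A real model would account for seasonality, certification lead time, and uncertainty in both rates, but even this simple projection turns a historical dashboard into a date that planning can act on.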

Skills Mapping & Competency Tracking

Many systems store skills as metadata tags. That does not make them operational.

A useful skills framework should:

- Define proficiency levels clearly for each role.

- Update skill levels automatically based on assessment and performance signals.

- Show progression trends over time.

- Show capability across roles to support workforce mobility.

If skills do not change when performance data changes, they are static labels. The key question: can you quantify skill movement quarter over quarter?

AI-Driven Assessments

Most enterprise LMS assessments still measure recall: multiple-choice questions, a pass mark, and a recorded completion. That tells you who clicked through correctly but does not tell you whether they can apply the skill under pressure.

AI-driven assessment should change three things:

1. What is measured

Instead of only asking knowledge questions, the system should include applied scenarios — decision-based simulations, open responses, role-play style prompts. If someone selects the correct theory but consistently chooses poor actions in simulations, the system should detect that discrepancy.

2. How proficiency is calculated

Proficiency should not reset after one test. The system should accumulate evidence across multiple interactions. If someone passes once but regresses in later simulations, their skill level should adjust downward.

3. What happens next

Assessment outcomes should automatically reshape the pathway. If a manager repeatedly mishandles conflict scenarios, the system should assign deeper practice modules — without waiting for manual review.

If scores are stored only for reporting, the loop is broken. Assessment becomes documentation, not decision input.

Enterprise Integrations (HRMS, CRM, Operational Systems)

Integration is often misunderstood. Most systems connect to HR or CRM to sync employee data and export reports. That keeps records accurate. It does not change how learning responds.

What actually matters is whether business events can trigger learning automatically.

For example:

- When HR records a promotion, leadership transition learning is assigned instantly.

- When CRM data shows a sustained drop in win rates, targeted sales reinforcement is deployed.

- When a safety incident is logged in an operational system, refresher training is triggered for the relevant team.

If someone has to manually notice the issue and assign training, the system is connected but not responsive.

The real difference is simple: Does business data just inform reporting, or does it trigger learning action?
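
To make the distinction concrete, here is a minimal sketch of an event handler in which a business event produces a learning assignment directly, with no one watching a dashboard. The event kinds, pathway names, and class names are hypothetical and do not correspond to any specific HRMS, CRM, or LMS API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BusinessEvent:
    source: str   # e.g. "hrms", "crm", "ops" (hypothetical labels)
    kind: str     # e.g. "promotion", "win_rate_drop", "safety_incident"
    subject: str  # employee or team identifier


@dataclass(frozen=True)
class Assignment:
    subject: str
    pathway: str
    reason: str   # kept so the trigger can be traced later


# Hypothetical mapping from event kind to learning pathway.
TRIGGERS = {
    "promotion": "leadership-transition",
    "win_rate_drop": "sales-reinforcement",
    "safety_incident": "safety-refresher",
}


def handle(event: BusinessEvent) -> Optional[Assignment]:
    """Turn a business event into a learning assignment, if one applies."""
    pathway = TRIGGERS.get(event.kind)
    if pathway is None:
        return None  # the event only updates records; no learning action
    return Assignment(event.subject, pathway, f"{event.source}:{event.kind}")


print(handle(BusinessEvent("hrms", "promotion", "emp-42")))
print(handle(BusinessEvent("hrms", "address_change", "emp-42")))
```

A system that only syncs records behaves like the second call for every event. A responsive one has a populated trigger table and a handler wired to the event stream.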

Data Security & Compliance

When AI starts recommending learning paths, adjusting proficiency levels, or triggering remediation, it is influencing employee development decisions. That raises governance stakes.

Security is not only about encrypting data or controlling login access. It also means controlling how AI makes decisions and how those decisions are tracked.

In practice, this includes:

Access control

- Can you restrict who sees performance-linked learning data?

- Are manager-level insights separated from executive-level analytics?

Auditability

- Can you track what content was generated, when, and by whom?

- Can you review changes to AI-generated assessments?

Explainability

- If the system recommends remediation or flags proficiency risk, can you see why?

- Is the decision traceable to assessment data or performance signals?

If your system cannot show the logic, for example, “based on these assessment scores” or “based on this certification expiry rule”, you have a governance problem.

So when we talk about data security in an AI LMS, it includes:

- Being able to explain why a decision was made.

- Being able to trace what data triggered it.

- Being able to show when and how content was assigned.

Without that visibility, AI-driven automation creates operational risk instead of reducing it.
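
One way to picture that visibility is a decision record stored alongside every automated action. The sketch below is an assumption about what such a record could contain, not a standard schema. The field names and the sample values are illustrative.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical audit entry for one automated learning decision."""
    employee_id: str
    action: str     # what the system did
    rule: str       # which rule or model output triggered it
    evidence: dict  # the data points the decision was based on
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def explain(self) -> str:
        """Human-readable answer to 'why did the system do this?'"""
        facts = ", ".join(f"{k}={v}" for k, v in sorted(self.evidence.items()))
        return f"{self.action} for {self.employee_id} because {self.rule} ({facts})"

    def to_audit_json(self) -> str:
        """Serialized form suitable for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    employee_id="emp-42",
    action="assign remediation",
    rule="conflict-scenario score below threshold",
    evidence={"attempts": 3, "avg_score": 0.55},
)
print(record.explain())
print(record.to_audit_json())
```

If a platform cannot produce something equivalent to `explain()` for a given recommendation, the explainability questions above have their answer.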

Real Business Benefits of an AI-Powered LMS

If you’re evaluating AI in learning, the only question that matters is this: Does it change business performance, or does it just modernize training delivery?

An AI-powered LMS creates value when it shifts how capability is deployed, measured, and corrected. The impact shows up across five measurable dimensions.

1. Higher Completion

Completion increases when employees stop feeling over-assigned. In most enterprises, entire populations are enrolled in programs because it’s operationally simpler. High performers disengage. Underperformers comply without improving.

An AI-driven learning system reallocates learning exposure:

- Employees who demonstrate proficiency skip foundational modules.

- Those showing weakness receive deeper reinforcement earlier.

- Content load reduces for competent performers and intensifies where variance is measurable.

The effect is not just higher completion (often 20–30% improvement). It’s lower cognitive fatigue across your workforce. You reduce wasted learning hours. That matters financially. If 5,000 employees each avoid three hours of redundant training annually, that’s 15,000 hours returned to operations.

2. Reduced Administrative Overhead

Enterprise L&D teams often operate as coordination hubs:

- Enrollment configuration

- Certification tracking

- Reminder escalation

- Manual compliance audits

This administrative layer grows with scale. When AI automates trigger logic:

- Promotions assign role-transition learning automatically.

- Certification expiries trigger renewal pathways.

- Repeated assessment gaps assign remediation without manual review.

This sharply reduces administrative effort and dependency on calendar cycles and spreadsheets. Governance becomes rule-based and automated, not manually supervised. That changes cost structure and frees senior L&D capacity for strategic planning.

3. Faster Onboarding

Traditional onboarding assumes a linear ramp. Everyone completes the same path. Performance variance emerges later.

AI-driven onboarding introduces early signal detection:

- Initial assessments map proficiency within the first weeks.

- Reinforcement deploys immediately if application gaps appear.

- Experienced hires accelerate without waiting for curriculum milestones.

The business impact is measurable:

- Reduced time-to-first-sale in commercial roles.

- Fewer quality defects in manufacturing onboarding.

- Lower compliance errors in regulated environments.

This is not about shortening onboarding content. It is about reducing time spent in low-productivity states.

4. Skills-Based Workforce Planning

Most workforce planning relies on FTE counts. That masks proficiency distribution. An AI LMS accumulates skill evidence continuously. Over time, you gain visibility into:

- Distribution of proficiency levels within a role.

- Concentration risk where only a few individuals operate at advanced levels.

- Certification decay trends before expiration.

This enables:

- Targeted upskilling rather than broad reskilling.

- Internal mobility decisions backed by measurable readiness.

- Reduced external hiring costs when capability gaps are narrow and trainable.

This way you move from counting roles to measuring depth.
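
A small sketch of what "measuring depth" could mean in practice: given a proficiency level per person in a role, summarize the distribution and flag concentration risk when too few people sit at an advanced level. The 1 to 5 scale, the level-4 cutoff, and the minimum of three advanced people are assumptions chosen for the example.

```python
from collections import Counter


def proficiency_profile(levels: dict,
                        advanced_level: int = 4,
                        min_advanced: int = 3) -> dict:
    """levels maps employee id -> proficiency level (hypothetical 1-5 scale)."""
    distribution = Counter(levels.values())
    advanced = sorted(e for e, lvl in levels.items() if lvl >= advanced_level)
    return {
        "headcount": len(levels),
        "distribution": dict(sorted(distribution.items())),
        "advanced": advanced,
        # Risk: advanced capability rests on fewer people than the minimum.
        "concentration_risk": len(advanced) < min_advanced,
    }


team = {"a": 2, "b": 3, "c": 3, "d": 5, "e": 2, "f": 4}
print(proficiency_profile(team))
```

An FTE count would report six people in the role. The profile shows that only two of them operate at an advanced level.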

5. Revenue and Risk Stabilization

The most strategic benefit is volatility reduction. In most organizations, capability gaps surface only after performance metrics decline:

- Sales targets missed.

- Audit findings reported.

- Safety incidents logged.

An AI-enabled system detects leading indicators:

- Declining assessment confidence scores.

- Repeated scenario misjudgments.

- Performance data drift inside CRM or operational systems.

When reinforcement deploys at that stage, before quarterly results expose the gap, you reduce performance swings. Over time, this stabilizes revenue conversion rates, compliance exposure and operational error frequency.

Use Cases of AI LMS Across Industries

The value of an AI LMS shows up differently depending on where skill gaps hurt the most. Below is a clear view of how it plays out across industries, not just at a training level, but at a business impact level.

| Industry | Typical Training Model | What AI Changes | Business-Level Effect |
|---|---|---|---|
| BFSI | Annual compliance programs; uniform certification cycles | Detects proficiency drift between cycles; targets remediation to high-risk units; triggers refreshers before expiry | Lower audit exposure, fewer regulatory escalations, tighter risk control |
| Retail | Seasonal product training; standardized frontline modules | Connects sales performance signals to skill reinforcement; differentiates high and low performers | Improved conversion consistency, better margin protection, faster frontline ramp |
| Manufacturing | Post-incident retraining; fixed safety refreshers | Identifies early deviation patterns in assessments; reinforces before incidents escalate | Reduced safety incidents, lower downtime cost, improved quality stability |
| SaaS | Quarterly product certification; static enablement paths | Links product updates to dynamic role-based reinforcement; aligns enablement to pipeline health | Faster deal velocity, stronger feature adoption, improved renewal performance |
| Healthcare | Mandatory recertification cycles; compliance-driven modules | Tracks protocol adherence patterns; deploys targeted refreshers before inspection or risk spikes | Lower compliance penalties, improved protocol accuracy, reduced patient risk |

What Changes Across All of Them

In every case, the shift is the same:

- Traditional model: Train broadly and review results later

- AI-driven model: Detect drift early and intervene precisely

How to Choose the Best AI LMS for Your Organization

If you are investing serious money in an AI LMS, the risk is not choosing the “wrong feature.” The risk is buying something that looks intelligent but behaves like a traditional LMS underneath.

The five questions below help you test for that gap before you commit.

1. Does it offer generative AI?

Almost every vendor now has generative AI. That is no longer differentiation. What you need to understand is:

- Can the system generate content aligned to your actual competency framework?

- Can you control what gets published and when?

- Is there version tracking for compliance-sensitive material?

- Can you see what was generated, edited, and deployed?

If AI can create modules instantly but you cannot trace or govern them, you are introducing operational risk. Especially in regulated industries. You want structured generation, not content explosion.

2. Is it truly adaptive or rule-based?

Many platforms say they are adaptive. In practice, they use predefined rules. Example of rule-based logic: “If score is below 70%, assign remediation.”

That works. But it does not learn. A stronger system should:

- Adjust proficiency levels based on patterns across multiple assessments.

- Detect repeated weakness in applied scenarios even if overall scores are high.

- Change sequencing as more performance data enters the system.

If someone improves or declines over time, does the system notice and react automatically? If the answer is no, it’s structured automation and not adaptive intelligence.

3. Does it integrate with HR systems?

Most LMS platforms integrate with HR to create accounts. That is basic plumbing. What matters is whether business events trigger learning automatically.

For example:

- A promotion happens: Role-transition learning is assigned instantly

- Close rates decline in a region: Reinforcement activates for that segment

- A safety incident is logged: Refresher training deploys immediately

If learning still depends on someone noticing a dashboard and manually assigning training, your response time remains slow. The question is whether business data only informs reports or also triggers action.

4. Is it enterprise scalable?

Scalability is not just about how many users the platform can support. It’s about whether the system can handle:

- Thousands of dynamically changing pathways

- Cross-region deployment

- Layered access controls

- Complex compliance structures

And equally important: Can your organization support it?

Adaptive systems require clean role definitions, competency mapping, and data discipline. Without that, even the best platform will produce noise.

5. Does it support a multilingual workforce?

If your workforce spans regions, AI must perform consistently across languages.

That means:

- Content generation must maintain meaning across languages, not just translate words.

- Performance analytics must remain accurate globally.

- Regional compliance rules must be respected in data handling.

If AI behaves differently across regions, you create inconsistency in capability development.

When you step back, the real decision comes down to this: Will this system meaningfully change how quickly your organization detects and responds to skill gaps?

If it does, it’s a structural upgrade. If it only makes training faster to produce, it's an incremental improvement at enterprise cost. That’s the lens worth applying before you commit the budget.

Why Disprz Is a Leading AI-Powered Enterprise LMS

Disprz is built around the idea that an enterprise learning platform should help you manage workforce capability continuously, not just distribute training. The platform combines AI-driven learning orchestration with a skill-first foundation so that learning decisions respond to real performance signals.

Here’s how that translates into practical capabilities:

AI-driven learning journeys

Learning paths are not fixed once they are created. As employees interact with assessments, content, and job-specific scenarios, the system adjusts sequencing and reinforcement. Someone demonstrating proficiency can move ahead faster, while those showing gaps receive deeper support. Over time, learning becomes more responsive to how people actually perform rather than how curricula were originally designed.

Skill-first architecture

Instead of organizing learning primarily around courses, Disprz structures the system around skills and competencies tied to roles. As assessments, simulations, and job performance signals accumulate, proficiency levels update continuously. This gives leaders a clearer picture of capability depth across teams rather than simply tracking course completion.

GenAI-powered assessments

Assessment creation is often one of the slowest parts of learning design. Disprz uses generative AI to build scenario-based exercises and applied learning checks that mirror real work situations. This allows teams to test decision-making and applied knowledge rather than only factual recall.

Enterprise-grade security and governance

In large organizations, AI capabilities must operate within strong governance frameworks. Disprz supports role-based access, detailed audit trails, and approval workflows so that AI-generated content and automated learning decisions remain transparent and controllable.

Proven global deployments

The platform is used by enterprises operating across regions, industries, and languages. These deployments have shaped its ability to handle distributed workforces, complex compliance requirements, and large-scale learning operations.

Measurable impact

Organizations typically evaluate Disprz on outcomes such as faster onboarding ramp-up, improved visibility into skill proficiency, and quicker reinforcement when capability gaps appear.

If you’re exploring how an AI-powered LMS could support your workforce strategy, the most practical next step is to book a personalized demo and see how the platform works in scenarios similar to your own environment.

Key Takeaways

1) An AI LMS shifts learning from static course delivery to real-time capability management.

2) Personalization becomes performance-driven, not just role-based learning assignments.

3) Predictive analytics helps detect skill gaps before business performance declines.

4) Workflow integrations enable learning to trigger automatically from real operational signals.

5) True AI LMS platforms adapt continuously, not just through predefined rule-based logic.

Conclusion

AI is redefining how enterprise learning systems operate. Instead of acting as a course distribution platform, an AI LMS becomes a dynamic capability engine that detects skill gaps, adapts learning paths, and triggers interventions based on real business signals.

For organizations in 2026, the real value lies not in faster content creation but in faster capability response. Choosing the right AI LMS means selecting a platform that connects learning directly to performance, risk management, and workforce readiness.

FAQs Related to AI Learning Management System (AI LMS)

1) What is an AI learning management system?

An AI learning management system is a platform that uses machine learning and data signals to adjust how training is delivered and measured. Instead of assigning the same courses to everyone, the system analyzes assessment results, engagement patterns, and role context to personalize learning journeys.

2) How does AI improve corporate training?

AI improves corporate training by making learning more targeted and responsive. Instead of rolling out generic programs to large groups, the system identifies where individuals or teams actually struggle and delivers focused reinforcement. You also spend less time managing assignments because many workflows trigger automatically based on data signals.

3) What is the difference between LMS and AI LMS?

A traditional LMS primarily distributes courses and tracks completion. An AI LMS goes further by analyzing learning data and adjusting pathways automatically. Instead of static training programs, you get dynamic learning journeys that evolve based on assessments, engagement, and role requirements.

4) Is an AI LMS suitable for enterprise organizations?

Yes, especially if you manage a large or distributed workforce. Enterprises often deal with complex roles, frequent skill updates, and regulatory requirements. An AI LMS helps you identify capability gaps earlier and deploy reinforcement without manually managing every assignment.

5) What features should I look for in an AI-powered LMS?

You should focus on capabilities that actually change how learning operates. Look for adaptive learning engines, AI-powered content recommendations, predictive analytics, and strong integrations with HR and operational systems. Governance also matters: the platform should provide audit trails and role-based access controls.

6) How does adaptive learning work in an AI LMS?

Adaptive learning means the platform adjusts content and difficulty based on how someone performs. If you demonstrate strong understanding early, the system may skip basic modules and move you to advanced material. If gaps appear, it assigns deeper reinforcement.

7) Can AI LMS integrate with HR and CRM systems?

Yes. Most enterprise-grade AI LMS platforms integrate with HR, CRM, and operational systems so learning can respond to business events. For example, when someone is promoted in the HR system, the platform can automatically assign role-transition training. If performance data changes in CRM, targeted reinforcement can be triggered.

8) Which is the best AI LMS for corporate training in 2026?

The best AI LMS depends on your organization’s needs, scale, and integration requirements. Instead of focusing only on brand names, you should evaluate how well the platform adapts learning paths, integrates with your systems, and provides visibility into workforce capability. Platforms such as Disprz are designed with these capabilities in mind, combining AI-driven learning journeys with enterprise governance and global deployment support.